When Systems Start Behaving Like People

Machine behavior does not absolve organizations of responsibility

There is a moment in every large organization when responsibility begins to dissolve.

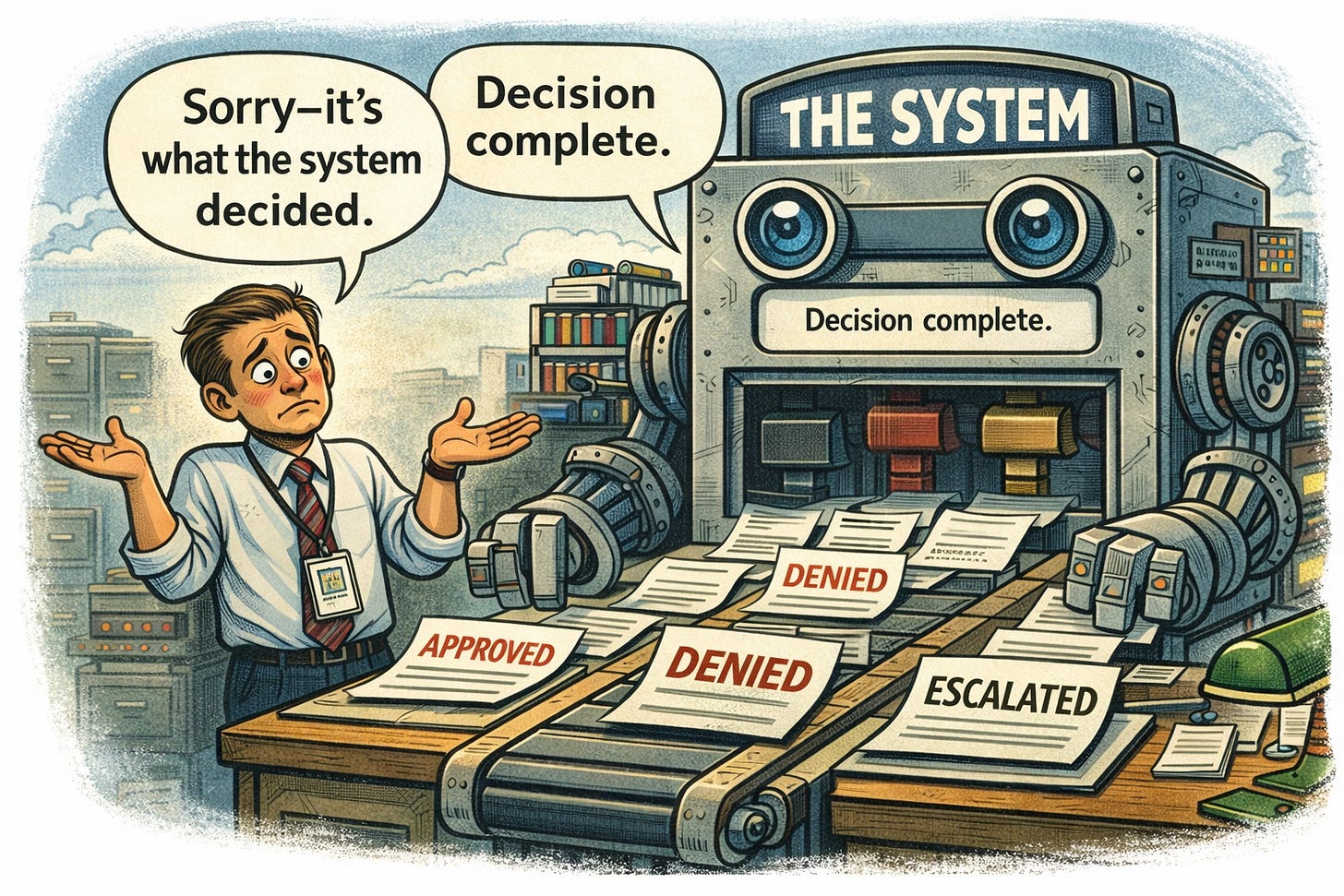

It does not happen loudly, with fanfare or a press release. There is no announcement or policy memo declaring that judgment is no longer required. Instead, something subtler occurs: decisions start emerging from systems rather than people, and everyone involved learns to treat those outcomes as if they had agency of their own.

“The system flagged it.”

“The process requires it.”

“There’s nothing I can do.”

At that point, the system is no longer a tool. It has begun to behave like an actor.

Behavior without agency

No system actually decides anything. It executes rules, thresholds, workflows, and feedback loops designed by humans. And yet, once those systems reach a certain level of complexity and consistency, they begin to feel intentional.

They approve. They deny. They escalate. They punish.

Not because they understand, but because they behave as if they do.

This is not a technical phenomenon. It is a psychological one.

Humans are remarkably quick to attribute agency to anything that behaves coherently over time. Once a system produces repeatable outcomes, we stop asking how those outcomes are generated and start treating them as choices.

That is the moment when systems begin to behave like people — not in reality, but in how we relate to them.

The quiet handoff of judgment

Inside institutions, this shift is reinforced structurally.

Systems are introduced to reduce error, increase efficiency, standardize outcomes, and limit discretion. At first, humans remain actively involved. They review outputs, question anomalies, override results.

Over time, the incentives change.

Questioning slows things down. Overrides require justification. Exceptions create work.

Gradually, review becomes confirmation. Discretion becomes risk. Judgment becomes friction.

The system keeps behaving. The humans adapt around it.

Eventually, no one feels empowered — or obligated — to intervene.

At that point, responsibility has not vanished. It has simply become unlocatable.

Institutions as emergent actors

What emerges next is something familiar to anyone who has worked inside a large bureaucracy: the institution itself begins to act as if it has preferences.

It is “strict” in some areas, “flexible” in others, “unforgiving” here, “lenient” there.

These traits are not the result of intent. They emerge from the interaction of rules, incentives, and feedback loops. But once they stabilize, people inside and outside the institution treat them as characteristics.

“The agency doesn’t like appeals.”

“The system is aggressive on compliance.”

“The process always escalates.”

Systems come to be described the same way people are.

This is how institutions acquire personality without consciousness — and authority without accountability.

Feedback loops that harden into fate

Once systems begin to operate at scale, feedback loops take over.

Outputs generate data. Data reinforces assumptions. Assumptions shape future outputs.

If a system denies often, denial becomes normalized. If it escalates aggressively, escalation becomes “the standard.” Over time, the system’s behavior justifies itself.

From the inside, this looks like consistency. From the outside, it looks like inevitability.

When harm occurs, it is rarely attributed to a single decision or actor. It is attributed to the process itself — which feels immutable, neutral, and beyond any one person’s control.

This is how injustice becomes procedural.

Why no one feels responsible

The most dangerous aspect of system behavior is not that it produces errors. It is that it makes responsibility feel inappropriate.

Individuals defer upward: “This is how the system is designed.”

Managers defer sideways: “This is what compliance requires.”

Leadership defers abstractly: “This is industry standard.”

At every level, responsibility is displaced — not maliciously, but structurally.

When everyone participates, no one decides.

And when no one decides, harm has no author.

Automation accelerates the illusion

AI-driven systems intensify this dynamic, but they do not create it.

Automation increases speed, scale, and opacity. Decisions that once required explanation now arrive as outputs. Appeals are routed back into the same logic that produced the outcome. Review exists, but only as a procedural step.

The system behaves. The institution obeys.

Crucially, no one believes the system is sentient. That is not the illusion. The illusion is subtler: that behavior implies legitimacy.

If the system keeps producing answers, surely those answers must be grounded in reason.

This is how behavior replaces judgment.

The moral hazard of “it just works”

Systems that “just work” are the most dangerous. Why? They do not provoke scrutiny. They do not generate obvious failure. They do not demand explanation.

They simply operate — and in operating, they reshape norms. What was once questionable becomes routine. What was once exceptional becomes policy.

What was once contested becomes automated.

At that point, even well-intentioned humans find it difficult to intervene. Not because they don’t care, but because the system has already moved past the point where any single person feels authorized to act.

Remembering the distinction we keep losing

Systems do not think. They do not judge. They do not bear responsibility.

But they can behave in ways that invite people to forget that.

When we allow behavior to substitute for accountability, we create institutions that act without deciding and enforce outcomes without ownership.

That is not intelligence. It is abdication disguised as order.

The danger is not that systems will become human. It is that humans will stop acting like decision-makers.

And when that happens, the system doesn’t just behave like a person. It becomes one — in all the ways that matter, and none of the ways that count.

Now, add modern AI and you have the scope of the problem!

This quote from John Gall’s 1975 book Systemantics: How Systems Work and Especially How They Fail is right to the point: “Every large system is operating in failure mode.”